Imitation Learning, Physical AI, TeleRobotics, Teleoperation

Imitation Learning

Source: https://pressroom.toyota.com/toyota-research-institute-unveils-breakthrough-in-teaching-robots-new-behaviors/

“Our research in robotics is aimed at amplifying people rather than replacing them,” said Gill Pratt, Chief Scientist for Toyota Motor Corporation. “This new teaching technique is both very efficient and produces very high performing behaviors, enabling robots to much more effectively amplify people in many ways. Previous state-of-the-art techniques to teach robots new behaviors were slow, inconsistent, inefficient, and often limited to narrowly defined tasks performed in highly constrained environments.”

Force feedback stands at the core of the next generation of intelligent robotic systems. As a key enabler for imitation learning, Physical AI, telerobotics, and teleoperation, it provides the essential sensory interface between human expertise and machine intelligence. By capturing and reproducing fine force interactions with high fidelity, it allows AI systems to learn dexterous manipulation, adapt to unstructured physical environments, and extend precise human control across distance. At the convergence of advanced robotics, haptics, and machine learning, force feedback is shaping the technological foundation of truly embodied intelligence.

Benchmark Scenarios & Demonstrations

Single Arm + Glove

Source: https://www.senseglove.com/project-rembrandt/

Dual Arms + Gloves

Source: IEEE telepresence | TNO

Humanoid

Source: https://www.i-botics.com/projects/xprize/

Virtual

Source: Haption

High-quality sense-of-touch data is becoming a fundamental requirement for imitation learning in robotics, especially as robots must accurately reproduce human skills. Modern learning-from-demonstration systems increasingly depend on detailed sense-of-touch information to achieve reliable behavioral cloning and robust robot skill learning. As human-robot learning advances, high-performance haptic feedback, such as that provided by a force-feedback device, enables more stable robot policy learning and improves the consistency of demonstration-based robotics in both real-world and simulated environments.
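To make the idea of behavioral cloning from force data concrete, here is a minimal sketch that fits a one-dimensional linear policy to demonstrations by least squares. Everything in it is an assumption made for illustration: the synthetic "expert" (a proportional force regulator aiming at 5 N), the variable names, and the choice of a linear policy. It is not Haption's method, and real systems typically use far richer observations and models.

```python
import random


def fit_linear_policy(observations, actions):
    """Ordinary least squares for: action ~ gain * observation + bias."""
    n = len(observations)
    mean_x = sum(observations) / n
    mean_y = sum(actions) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(observations, actions))
    var = sum((x - mean_x) ** 2 for x in observations)
    gain = cov / var
    bias = mean_y - gain * mean_x
    return gain, bias


# Synthetic demonstrations (hypothetical expert, not measured data):
# the operator commands a velocity proportional to the contact-force error.
rng = random.Random(0)
TRUE_GAIN = -0.02      # (m/s) per newton of force error
TARGET_FORCE = 5.0     # N
forces = [rng.uniform(0.0, 10.0) for _ in range(500)]
expert_actions = [
    TRUE_GAIN * (f - TARGET_FORCE) + rng.gauss(0.0, 1e-3) for f in forces
]

# Behavioral cloning step: supervised regression from observation to action.
gain, bias = fit_linear_policy(forces, expert_actions)


def cloned_policy(measured_force):
    """Velocity command predicted by the cloned policy."""
    return gain * measured_force + bias
```

With clean force measurements the fitted gain and bias land close to the expert's values; noisier or lower-resolution force data would degrade the fit, which is the intuition behind the emphasis on data quality above.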

Advances in inverse reinforcement learning in robotics, generative models for robot imitation, and diffusion models for robot control are transforming how robots acquire new skills. Emerging foundation models for robotics, offline imitation learning, and policy cloning for robotics enable greater autonomy, while techniques such as kinesthetic teaching for robots, high-quality demonstration datasets for robotics, and robust robot motion imitation push real-world performance forward. These innovations are increasingly critical for imitation learning in industrial robotics, surgical robotics, service robots, robotic assembly, and human-robot collaboration across sectors.

They also contribute to the rise of physical AI: systems that tightly couple perception, reasoning, and embodied action in the real world. This includes multimodal world models for robots, simulation-to-real transfer, large-scale robot learning from internet and teleoperation data, and foundation models that integrate vision, language, and control. Such capabilities allow robots to generalize across tasks, interact safely with humans, and adapt to unstructured environments.

Discover how Haption’s products enable you to work efficiently on these challenges.

Real-time data streaming up to 1 kHz

Data collection from a high-performance haptic force-feedback teleoperation system

Designed to be compatible with all industrial and humanoid robots

Single-arm and dual-arm setups

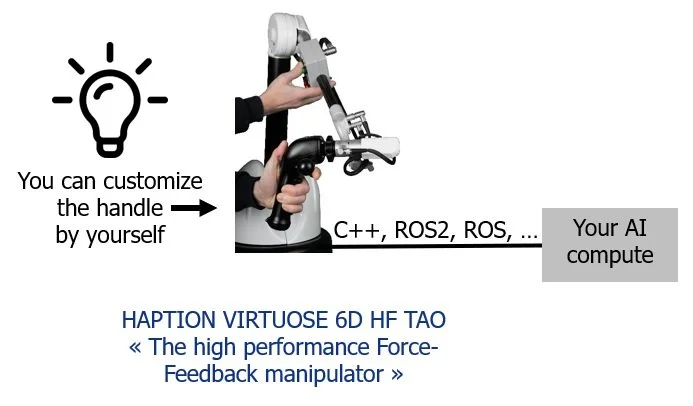

CONFIGURATION #1. DIY

For robotics, haptics, and real-time control experts.

Maximum flexibility and customization (C++, ROS, ROS2, …)

Full control over algorithms and system performance

Ideal for pushing the performance to the limits

Illustration of the “DIY” configuration, using ROS 2 as an example

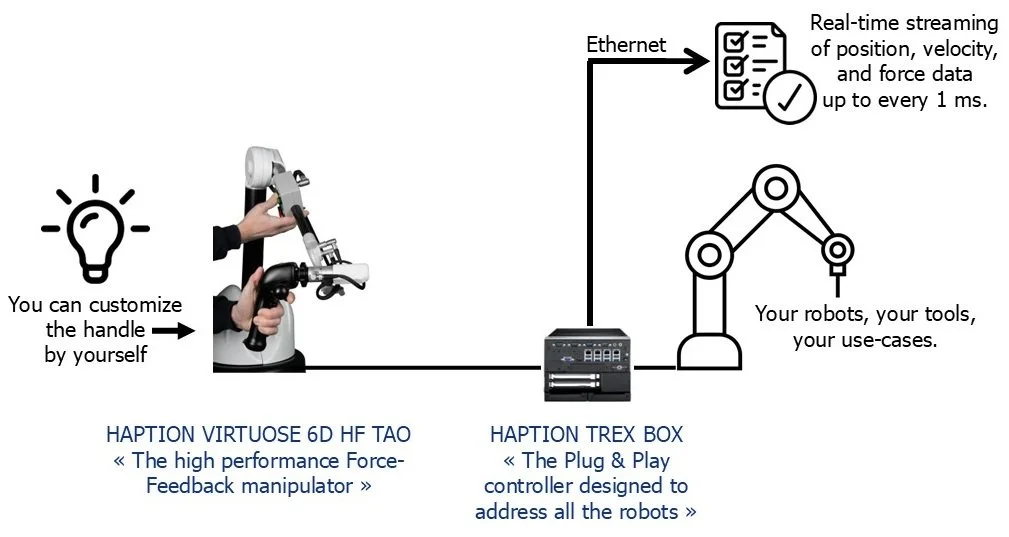

CONFIGURATION #2. Plug & Play Standard or Customized

For AI, algorithm and real-time data collection experts.

Immediate access to real-time data: position, velocity, and force

Rapid deployment bundle with no complex integration

Focus entirely on algorithm development and imitation learning

Illustration of the “Plug & Play” configuration, which enables real-time data collection.
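As a rough sketch of what collecting the position, velocity, and force stream could look like on the recording side, the example below writes timestamped samples to CSV at a nominal 1 kHz. The `HapticSample` fields, the `fake_device_stream` generator, and the single-axis spring model are all hypothetical stand-ins; no actual Haption API is used or implied, and a real recorder would read from the device driver instead.

```python
import csv
import io
import math
from dataclasses import astuple, dataclass, fields

SAMPLE_RATE_HZ = 1000  # upper streaming rate quoted on this page


@dataclass
class HapticSample:
    """One demonstration record (field names are illustrative)."""
    t: float         # seconds since start of recording
    position: float  # m, single axis for brevity
    velocity: float  # m/s
    force: float     # N


def fake_device_stream(n_samples, rate_hz=SAMPLE_RATE_HZ):
    """Hypothetical stand-in for a device driver.

    Yields a 1 Hz sinusoidal motion pressed against a virtual spring.
    """
    stiffness = 200.0  # N/m, assumed
    amplitude = 0.05   # m, assumed
    omega = 2.0 * math.pi
    for i in range(n_samples):
        t = i / rate_hz
        pos = amplitude * math.sin(omega * t)
        vel = amplitude * omega * math.cos(omega * t)
        yield HapticSample(t, pos, vel, -stiffness * pos)


def record_demonstration(stream, out_file):
    """Write every sample as one CSV row; return the number of samples."""
    writer = csv.writer(out_file)
    writer.writerow([f.name for f in fields(HapticSample)])
    count = 0
    for sample in stream:
        writer.writerow(astuple(sample))
        count += 1
    return count


# Record two seconds of simulated data into an in-memory buffer.
buffer = io.StringIO()
n_written = record_demonstration(fake_device_stream(2 * SAMPLE_RATE_HZ), buffer)
rows = buffer.getvalue().splitlines()
```

Swapping `io.StringIO()` for an open file handle would persist the demonstration to disk; a flat CSV is used here only because it is easy to inspect, and binary or columnar formats are likely a better fit for long 1 kHz recordings.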

Other architectures are available; please contact us.

Adopt our technologies, accelerate your projects!

Partner Spotlight: SenseGlove

Our technology, combined with our partner’s haptic glove, creates a fully integrated haptic system that delivers a complete and immersive user experience.

By unifying tactile feedback at the hand with kinesthetic force feedback from the fingers to the shoulder, the system provides a continuous and realistic sense of physical interaction across the entire upper limb.

This unique architecture significantly reduces user fatigue by avoiding the burden of wearing heavy active devices on the hand alone, while ensuring natural and ergonomic operation over extended sessions.

Designed for seamless integration, the platform offers high-quality interoperability and native ROS 2 drivers, enabling rapid deployment within advanced teleoperation, imitation learning, and industrial robotics environments.

About SenseGlove: https://www.senseglove.com/project-rembrandt/

How does it work?

The solution is built around the standard VIRTUOSE TAO HF device from Haption, which serves as the grounded kinesthetic force-feedback interface of the system. When combined with the SenseGlove haptic glove, it creates a seamless, interoperable haptic force-feedback environment using native ROS 2 drivers. Your team can then carry out the final integration into your specific system environment, whether for robotics or virtual reality applications, according to your project requirements and use case.

You first need to purchase a VIRTUOSE TAO HF device from Haption. This device is fully compatible with the SenseGlove haptic glove through an integration solution provided by SenseGlove.

For greater convenience, you can also purchase the VIRTUOSE TAO HF and the SenseGlove haptic glove as a bundle directly from SenseGlove.